Google’s PageRank Algorithm: from the time the patent was granted in 2001 to when it expired in June 2019, we’ve gone from doing 30 million searches a day against 500 gigabytes of data to doing 5.6 billion searches a day against 2 trillion gigabytes of data!

It appears that the patent on Google’s PageRank algorithm has expired: https://patents.google.com/patent/US6285999B1/en

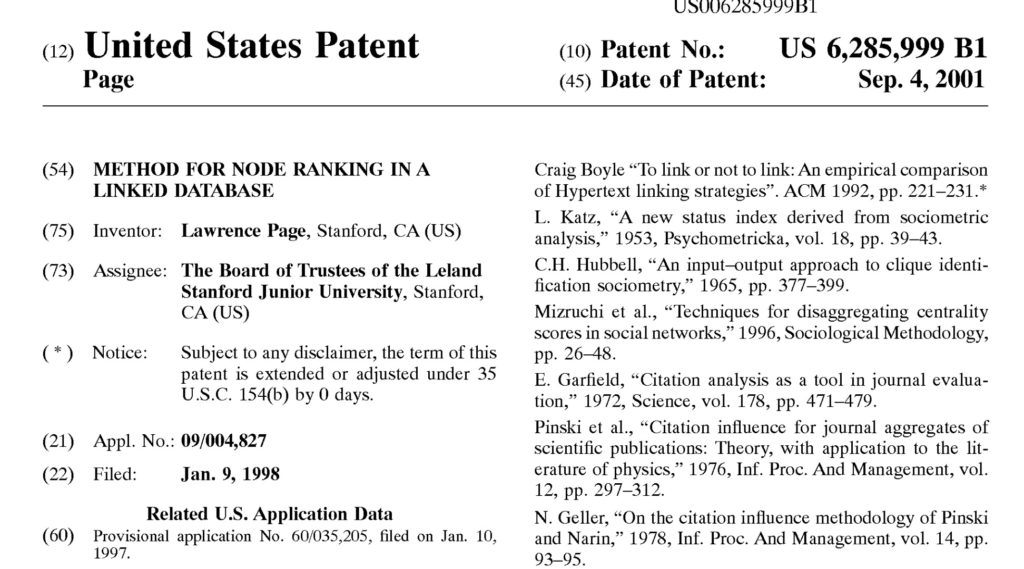

Lawrence Page, who co-founded Google with Sergey Brin, developed the PageRank algorithm in 1997. The patent application was filed on January 9, 1998, and the patent was granted on September 4, 2001. Notably, the patent expired on June 4, 2019.

The patent is available at https://patentimages.storage.googleapis.com/37/a9/18/d7c46ea42c4b05/US6285999.pdf

The abstract reads as follows:

A method assigns importance ranks to nodes in a linked database, such as any database of documents containing citations, the world wide web or any other hypermedia database. The rank assigned to a document is calculated from the ranks of documents citing it. In addition, the rank of a document is calculated from a constant representing the probability that a browser through the database will randomly jump to the document. The method is particularly useful in enhancing the performance of search engine results for hypermedia databases, such as the world wide web, whose documents have a large variation in quality.
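
To make the abstract a little more concrete, here is a minimal Python sketch of the idea it describes: a document's rank comes from the ranks of the documents citing it, plus a constant for the random jump. This is only an illustration, not the patent's exact procedure. The function name `pagerank`, the `damping` value of 0.85, the fixed iteration count, the way pages with no outgoing links are handled, and the tiny four-page example graph are all assumptions made for this sketch.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Illustrative rank computation. `links` maps each page to the pages it cites.

    Assumptions (not taken from the patent text): damping=0.85, a fixed number
    of iterations, and spreading the rank of link-less pages evenly.
    """
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    ranks = {page: 1.0 / n for page in pages}  # start from a uniform guess

    for _ in range(iterations):
        # The "random jump" constant: every page gets the same baseline share.
        new_ranks = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page passes its rank on, split evenly among the pages it cites.
                share = damping * ranks[page] / len(targets)
                for target in targets:
                    new_ranks[target] += share
            else:
                # Page with no outgoing links: spread its rank over all pages.
                share = damping * ranks[page] / n
                for target in pages:
                    new_ranks[target] += share
        ranks = new_ranks
    return ranks


# Hypothetical four-page web: C is cited by A, B, and D; nobody cites D.
example_links = {"A": ["B", "C"], "B": ["C"], "C": ["A"], "D": ["C"]}
ranks = pagerank(example_links)
for page in sorted(ranks, key=ranks.get, reverse=True):
    print(page, round(ranks[page], 3))
```

In this toy graph the most-cited page (C) ends up ranked highest, and the page nobody cites (D) keeps only the baseline it gets from the random jump.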

The patent also included the following interesting paragraph:

Currently, a popular search engine might execute over 30 million searches per day of the indexable part of the web, which has a size in excess of 500 Gigabytes. Information retrieval systems are traditionally judged by their precision and recall. What is often neglected, however, is the quality of the results produced by these search engines. Large databases of documents such as the web contain many low quality documents. As a result, searches typically return hundreds of irrelevant or unwanted documents which camouflage the few relevant ones. In order to improve the selectivity of the results, common techniques allow the user to constrain the scope of the search to a specified subset of the database, or to provide additional search terms.

This was from September 2001. As of June 2019, according to … Google (!) there are about 63,000 searches per second or 5.6 billion searches per day. And how big is the indexable part of the web? In 2001 it was 500 gigabytes. According to Cisco, as of June 2019 the indexable part of the Web is about 2 zettabytes, which is 2,000 exabytes, which is 2 million petabytes, which is 2 billion terabytes, which is 2 trillion gigabytes.
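
For anyone who wants to check the arithmetic, here is a quick Python sketch using only the figures quoted above. It assumes decimal units (each step from zettabytes down to gigabytes is a factor of 1,000), and the variable names are just for illustration. Note that 63,000 searches per second works out to roughly 5.4 billion per day, a little under the rounded 5.6 billion figure.

```python
SECONDS_PER_DAY = 24 * 60 * 60

# Searches: the per-second figure converted to a per-day figure.
searches_per_second = 63_000
searches_per_day = searches_per_second * SECONDS_PER_DAY

# Data: 2 zettabytes expressed in gigabytes, assuming decimal (x1000) units.
# ZB -> EB -> PB -> TB -> GB is four steps of 1,000.
web_2019_gb = 2 * 1000**4

# Growth factors relative to the figures quoted in the patent.
searches_then_per_day = 30_000_000
web_then_gb = 500
search_growth = 5_600_000_000 / searches_then_per_day
data_growth = web_2019_gb / web_then_gb

print(f"{searches_per_day:,} searches per day")
print(f"{web_2019_gb:,} gigabytes")
print(f"searches grew about {search_growth:.0f}x, data about {data_growth:,.0f}x")
```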

So … from the time Google’s PageRank patent was granted in 2001 to when it expired in June 2019, we’ve gone from doing 30 million searches a day against 500 gigabytes of data to doing 5.6 billion searches a day against 2 trillion gigabytes of data. If only our mobile devices were as resilient as Google’s algorithms!